Now that OpenDroneMap has a new website, we have a blog to go with it. For the first post, we will repost something from https://smathermather.com on the upcoming changes to OpenDroneMap:

Problem Overview:

One of the greatest challenges with OpenDroneMap (ODM) is getting great results out of sparse data. I used to describe this as getting good data out of mediocre inputs, but this isn’t a fair descriptor, and here I’ll make a public apology: just because I have the time to fly with lots of overlap most of the time doesn’t mean it should be a requirement for everyone else. Ok. Now that that is off my chest, let’s talk about upcoming improvements which address this and more.

What does Better everything mean?

In the title, I say “Better everything”. The secret to better ODM data (and Piero Toffanin can take nearly all the credit for these improvements and for discovering how to make them) is to improve nearly every step in the pipeline. Let’s talk in general terms about what that means:

We can roughly break the pipeline into the following steps:

1. Extract features

2. Match features

3. Structure from motion

4. Dense matching / Multi-view stereo

5. Meshing

6. Texturing

7. Final products

   - Orthophoto

   - Digital surface model

   - etc.

There are other parts and pieces here, such as spatial referencing and undistorting of imagery, but this is a good enough outline for discussion purposes.
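For readers who think in code, the outline above can be sketched as an ordered list of stages, each handing its result to the next. This is only an illustration of the ordering: the stage names, `step_number`, and `run_pipeline` are hypothetical and are not ODM's actual module names or API.

```python
from typing import Callable, Dict, List

# Hypothetical stage names mirroring the outline above (not ODM's real modules).
STAGES: List[str] = [
    "extract_features",
    "match_features",
    "structure_from_motion",
    "dense_matching",
    "meshing",
    "texturing",
    "final_products",
]


def step_number(stage: str) -> int:
    """Return the 1-based position of a stage in the outline."""
    return STAGES.index(stage) + 1


def run_pipeline(handlers: Dict[str, Callable[[dict], dict]], state: dict) -> dict:
    """Run every stage in order, passing each stage's output to the next."""
    for stage in STAGES:
        state = handlers[stage](state)
    return state
```

Because each stage consumes the output of the one before it, a weak step anywhere in the chain limits the quality of everything downstream.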

As it happens, our underlying process is pretty good for steps 1 through 3. OpenSfM is our library of choice, and it handles the SfM functions quite well. At some point I would like to compare the positions of a really good RTK dataset to what we get from SfM, and maybe even do some testing with a synthetic dataset for a robust test of our SfM quality, but on the whole we don’t have notable deficiencies in this part of the tool.

Focusing on the parts to improve:

This just leaves us the other half… steps 4 through 7. The first step that is important here is dense matching. After a considerable amount of parameter testing, tuning, etc., we concluded that OpenSfM’s dense matching isn’t up to snuff yet. This isn’t necessarily a critique: it’s one of the younger parts of the toolchain, and more mature FOSS solutions are out there already.

Multi-view Stereo:

So, we look to improve our dense matching / multi-view stereo. We are getting some favorable results by changing the underlying multi-view stereo library. These results compare favorably to Agisoft and Pix4D, two closed-source industry standards for UAV photogrammetry:

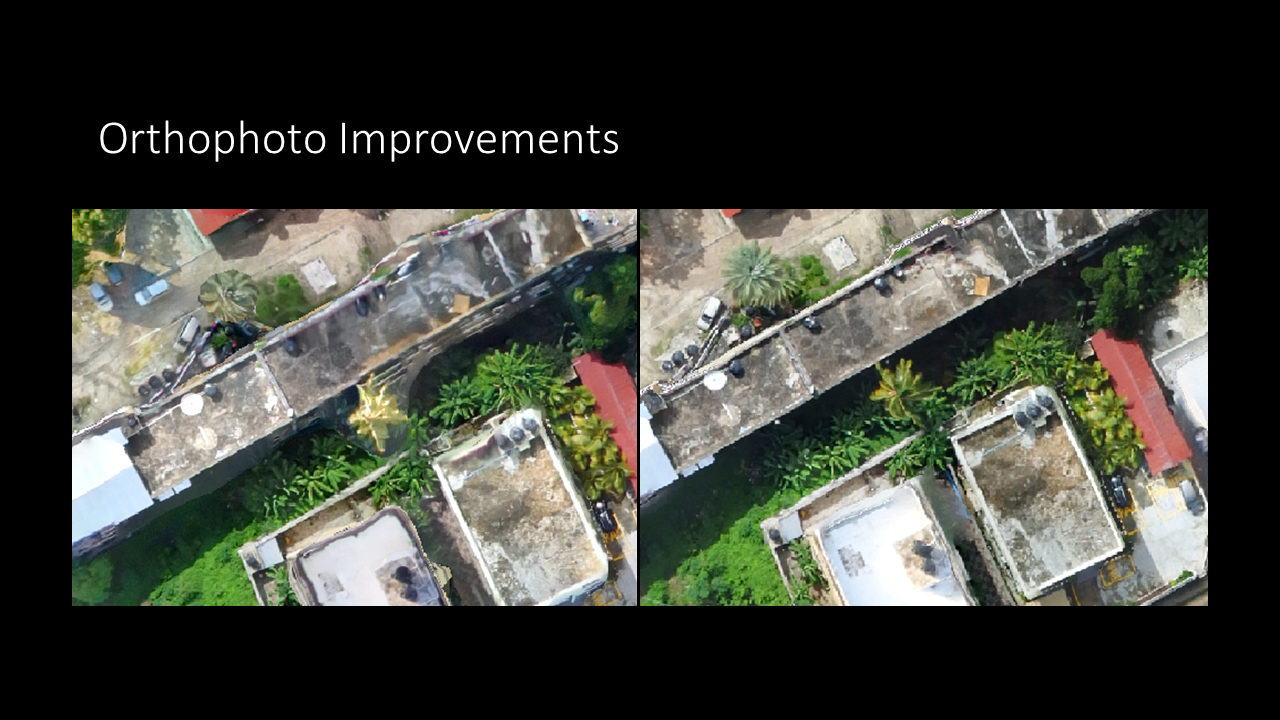

Meshing and Texturing:

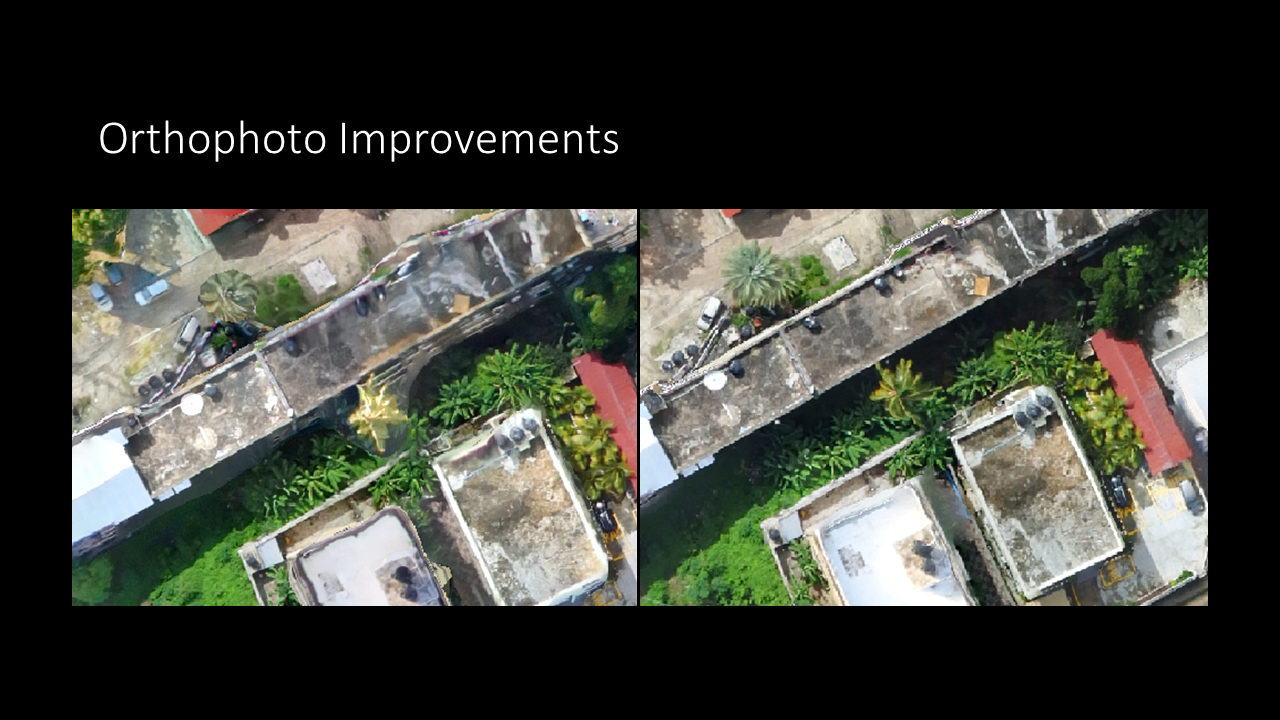

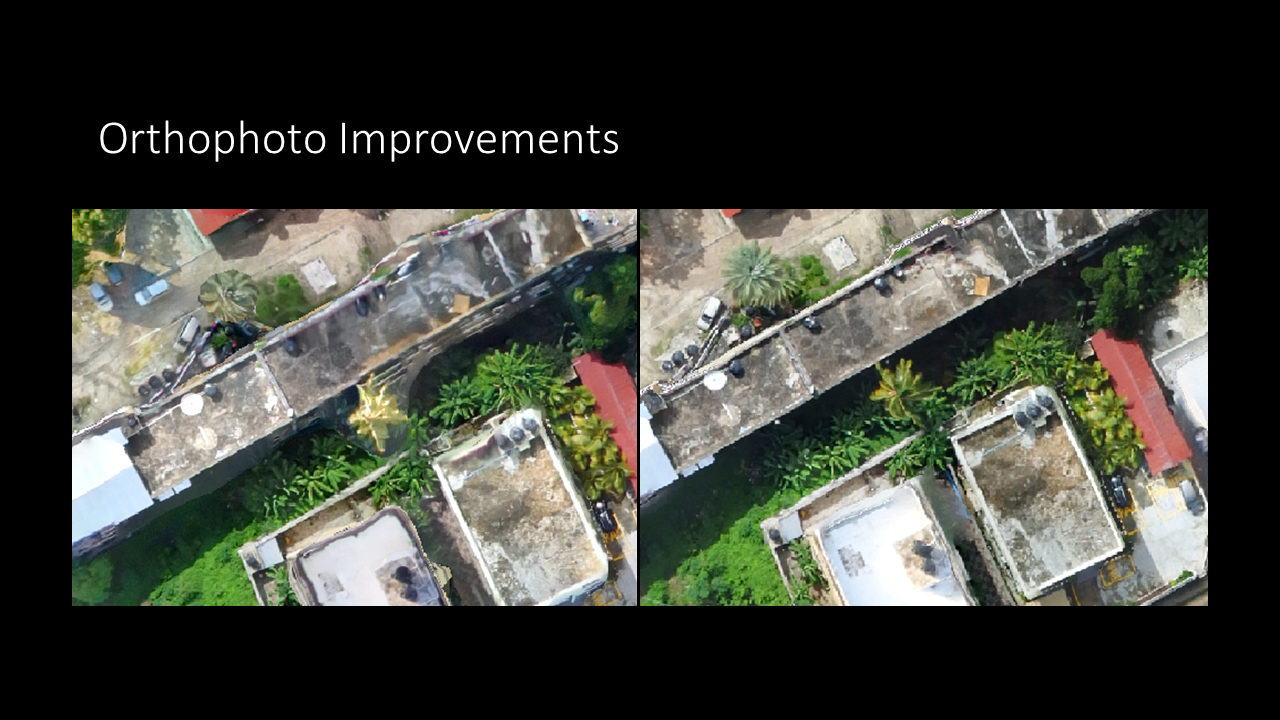

Now, for steps 5 and 6, we need an improved meshing procedure and improved texturing in order to get great results in our final output. Here, we can evaluate with orthophotos as a final product: